I ran four experiments against the Anthropic API to understand prompt caching. Not the concept, the actual behavior. What gets cached, when it breaks, how the numbers look in practice.

Thariq Shihipar, an engineer on the Claude Code team, wrote that prompt caching is the architectural constraint around which the entire product is built. They declare SEVs when cache hit rates drop. I wanted to see the numbers myself.

This matters for two reasons:

Cost. Without prompt caching, a long Opus coding session (100 turns with compaction cycles) can cost $50-100 in input tokens. With it, $10-19. This is why Claude Code Pro at $20/month is economically viable.

Things you do can break it. Adding an MCP tool, putting a timestamp in your system prompt, switching models mid-session — each of these can invalidate the entire cache and 5x your costs for that turn. Understanding the mechanism tells you what to avoid.

What prompt caching actually is

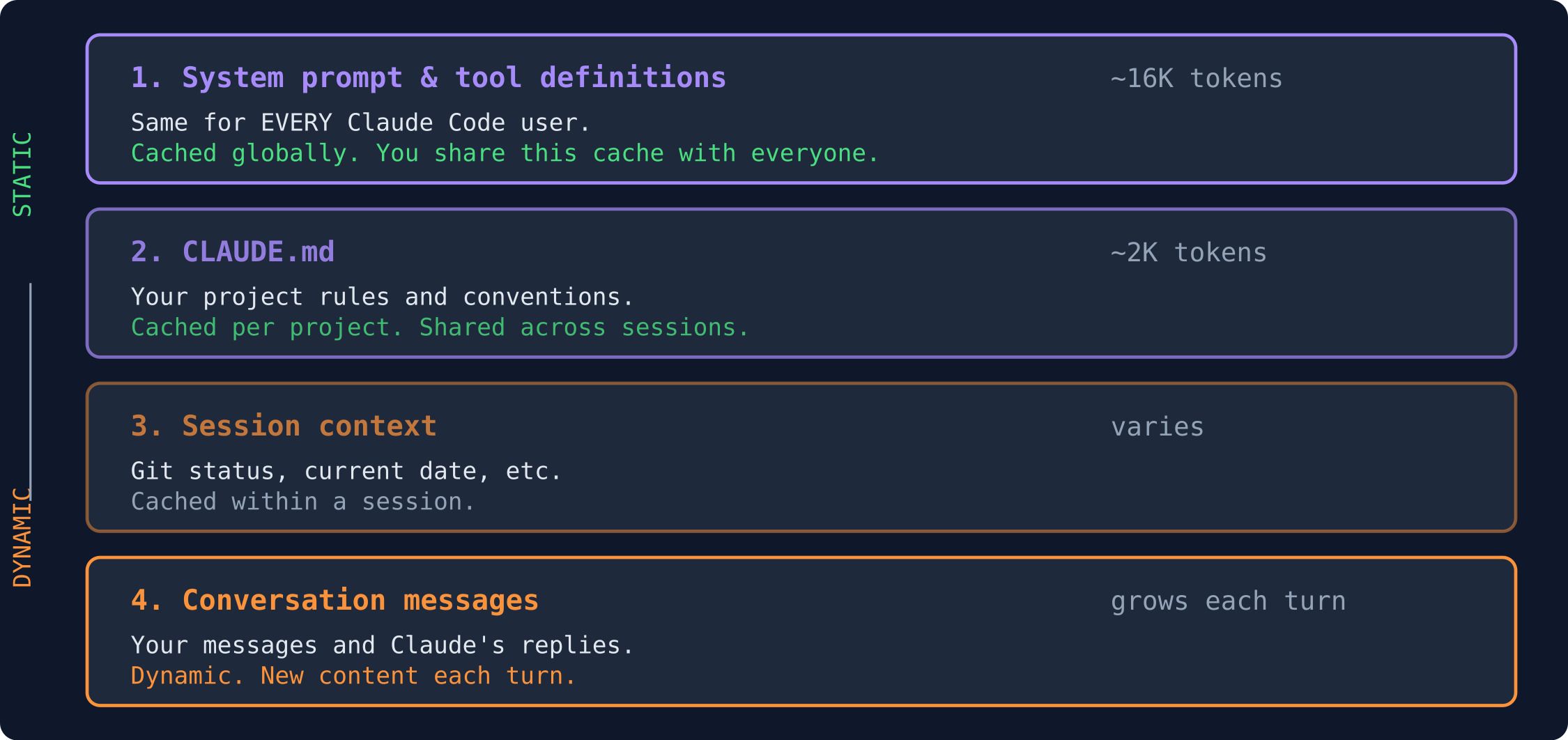

Every time you send a message in Claude Code, the entire conversation gets re-sent to the API. Your first message sends the system prompt, tool definitions, CLAUDE.md, and your message. Your tenth message sends all of that plus the previous nine exchanges. Your fiftieth message sends everything from the beginning plus 49 rounds of conversation.

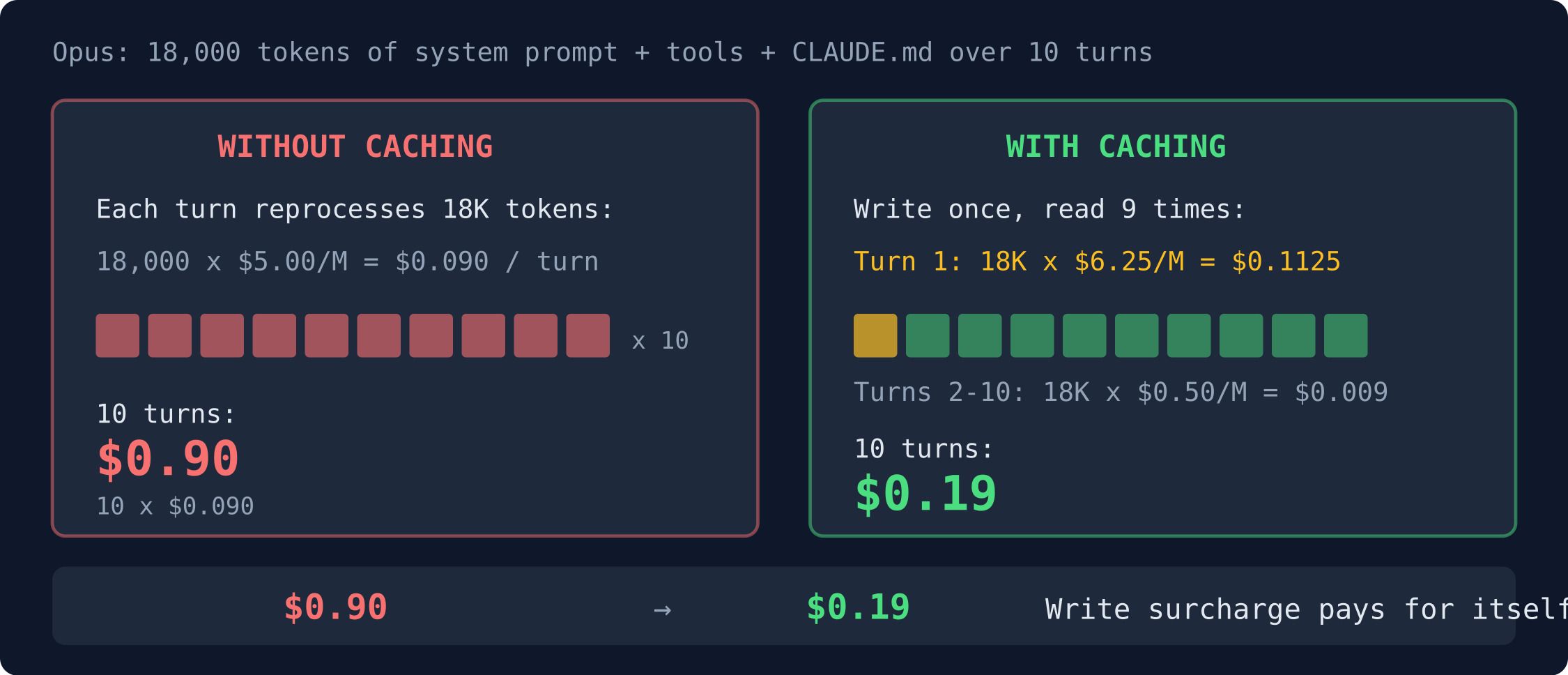

Without caching, the model reprocesses every token from scratch each time. At $5 per million tokens on Opus, even 10 million tokens costs $50 for one coding session. At 20 million (a heavy session with multiple compaction cycles), that's $100.

Prompt caching stores the computation from processing previous tokens. When your next request starts with the same prefix, the model skips recomputing what it's already seen. Those cached reads cost 10% of the normal input price on Anthropic — $0.50 per million instead of $5.

With a 90% cache hit rate, that $100 session costs about $19.
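The arithmetic behind that figure can be sketched in a few lines. This is a rough model, assuming the $5 per million input price and the 10% cached-read rate quoted above, and ignoring the cache-write surcharge; the function name is only illustrative.

```python
OPUS_INPUT_PER_MTOK = 5.00    # dollars per million input tokens (figure quoted above)
CACHE_READ_MULTIPLIER = 0.10  # cached reads are billed at 10% of the input price


def session_input_cost(total_tokens, hit_rate, price_per_mtok=OPUS_INPUT_PER_MTOK):
    """Input cost in dollars for a session, ignoring the cache-write surcharge."""
    mtok = total_tokens / 1_000_000
    cached = mtok * hit_rate * price_per_mtok * CACHE_READ_MULTIPLIER
    uncached = mtok * (1 - hit_rate) * price_per_mtok
    return cached + uncached


for rate in (0.0, 0.5, 0.9):
    print(f"hit rate {rate:.0%}: ${session_input_cost(20_000_000, rate):.2f}")
```

At a 90% hit rate the 20-million-token session comes out at $19.00, matching the number above; at 0% it is the full $100.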

Why the prefix matters

The cache matches from the beginning of your request forward. Think of it like autocomplete — the API checks how much of your new request matches what it already processed.

If any byte changes in that prefix, the cache misses and everything gets recomputed. I'll show you exactly what that looks like in the experiments below.

Why this works at all

Think of it like grading an essay. A student hands you a 10-page paper. You read all 10 pages, making margin notes as you go — circling key points, drawing connections between paragraphs. Then you give feedback on the last section.

The student revises the last paragraph and hands it back. Without caching, you throw away all your margin notes and re-read from page 1. With caching, you keep your notes, flip to where you left off, and just read the new paragraph.

That's prompt caching. The "margin notes" are what engineers call the KV cache — the intermediate computation the model builds up as it reads through your prompt. Providers store that computation so the model doesn't have to redo it.

Here's the technical version. When a transformer reads a token, it runs that token through an attention step. During attention, each token produces three vectors: a Query (what am I looking for?), a Key (what do I contain?), and a Value (what information do I carry?). To process a new token, the model computes its Query, compares it against the Keys of all previous tokens to figure out which ones are relevant, then takes a weighted sum of the corresponding Values. That weighted sum becomes part of the new token's representation.

The KV cache is the stored Key and Value vectors for every token the model has already processed. When the cache is warm, a new token can immediately look up all previous Keys and Values without recomputing them. Without the cache, the model has to regenerate every previous token's K and V from scratch — running the full attention computation across the entire prompt.

The important part: this computation is autoregressive — each token's Key and Value depend on all tokens before it, but never on tokens after it. Token 500's attention output is influenced by tokens 1-499, but not by token 501. This is what makes prefix caching possible. If tokens 1-499 haven't changed, their Keys and Values are still correct, even if token 500 is different.

But if token 200 changes, the Keys and Values for tokens 201-499 are all wrong — because each of them was computed with token 200 as an input. You have to recompute from token 200 onward.
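A toy version of this dependency can be run in pure Python. It is a sketch under heavy assumptions: tokens are plain floats, there is one attention head, and the Query, Key, and Value projections are the identity. With that setup the first layer's Keys and Values depend only on each token itself, and the dependence on earlier tokens shows up in the layer's outputs, which are what a second layer would turn into its own Keys and Values.

```python
import math


def causal_attention(xs):
    """One toy attention layer over scalar tokens with identity Q/K/V projections."""
    outputs = []
    for i, query in enumerate(xs):
        visible = xs[: i + 1]                          # causal mask: positions <= i only
        scores = [query * key for key in visible]
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]  # numerically stable softmax
        total = sum(weights)
        outputs.append(sum(w * v for w, v in zip(weights, visible)) / total)
    return outputs


def first_changed_position(a, b):
    """Index of the first position where two equal-length sequences differ."""
    for i, (x, y) in enumerate(zip(a, b)):
        if x != y:
            return i
    return len(a)


base = [0.1, 0.4, -0.3, 0.8, 0.5, -0.2]
edit_last = base[:-1] + [0.9]                 # change only the final token
edit_middle = base[:2] + [0.7] + base[3:]     # change the token at index 2

# Layer-1 outputs are the inputs from which layer 2 would build its Keys and Values.
layer2_inputs = causal_attention(base)
print(first_changed_position(layer2_inputs, causal_attention(edit_last)))    # 5
print(first_changed_position(layer2_inputs, causal_attention(edit_middle)))  # 2
```

Editing the last token leaves every earlier position bit-for-bit identical, so its stored work stays valid. Editing index 2 changes every position from 2 onward, which is the invalidation described above.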

That's why it's called prefix caching. The cache works from the beginning forward. Change anything in the prefix, and everything after it gets invalidated. My Experiment 2 below shows exactly what happens when you change two letters — 2,727 tokens of stored computation become useless.

If you want to see the actual matrix math behind this — how the model builds those Key and Value vectors step by step — Sam Rose's ngrok post is the best visual walkthrough I've found.

One thing people get wrong: caching doesn't return a "cached answer." The stored computation is intermediate work (the K and V matrices), not output. Temperature, sampling, and other randomness settings happen after the model finishes processing. Cached prompts produce the same diversity of responses as uncached ones. The model still generates its response token by token with all the usual randomness. It just doesn't have to re-read the prompt to do it.

What's actually stored on the server

The cache doesn't store your prompt text, and the cached payload isn't a hash either. What Anthropic's servers keep is the KV cache: the key and value matrices that the model computes during the attention step as it reads through your prompt. These are large numerical tensors that live in GPU memory (VRAM) on Anthropic's infrastructure.

When you send a request, the server hashes your prompt prefix to look up whether a matching KV cache exists. If it does, those tensors are loaded directly into the model's attention layers — the model picks up reading where the cached prefix ends, as if it had just finished reading all those tokens itself. No recomputation needed.
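The lookup can be pictured with a toy dictionary keyed by a hash of the prefix. This is only a mental model, not Anthropic's implementation: the class, the whitespace "tokenizer", and the placeholder string standing in for the tensors are all hypothetical.

```python
import hashlib


class ToyPrefixCache:
    """Maps a hash of a token prefix to a stand-in for stored K/V tensors."""

    def __init__(self):
        self._store = {}

    @staticmethod
    def _key(tokens):
        joined = "\x1f".join(tokens)  # unit separator keeps token boundaries unambiguous
        return hashlib.sha256(joined.encode("utf-8")).hexdigest()

    def write(self, prefix_tokens):
        self._store[self._key(prefix_tokens)] = f"<kv for {len(prefix_tokens)} tokens>"

    def lookup(self, request_tokens, cut):
        """Return (tokens served from cache, tokens to process fresh)."""
        prefix = request_tokens[:cut]
        if self._key(prefix) in self._store:
            return len(prefix), len(request_tokens) - len(prefix)
        return 0, len(request_tokens)


cache = ToyPrefixCache()
system = "You are a senior software engineer".split()
cache.write(system)

same = system + ["Fix", "the", "bug"]
changed = "You are a Senior Software Engineer".split() + ["Fix", "the", "bug"]

print(cache.lookup(same, cut=len(system)))     # (6, 3)
print(cache.lookup(changed, cut=len(system)))  # (0, 9)
```

Because the key is a hash of the exact prefix, a two-letter capitalization change produces a different key and the lookup finds nothing.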

To give you a sense of scale: each token produces a Key vector and a Value vector at every attention layer. For a model like Opus, that's hundreds of layers, each producing vectors of thousands of dimensions. A back-of-the-envelope estimate: a 100K-token prompt might produce a KV cache of 500MB-1GB per request. Anthropic is storing and retrieving this data in GPU memory for millions of concurrent users.

That's why there's a 25% surcharge on cache writes — you're paying for VRAM allocation, not just compute. And it's why there's a minimum token threshold to trigger caching: 1,024 tokens for Sonnet and Haiku, up to 2,048-4,096 for Opus. Below that threshold, the overhead of storing and looking up the cache isn't worth it. Claude Code's system prompt alone is ~4,000 tokens, so it always clears the minimum.

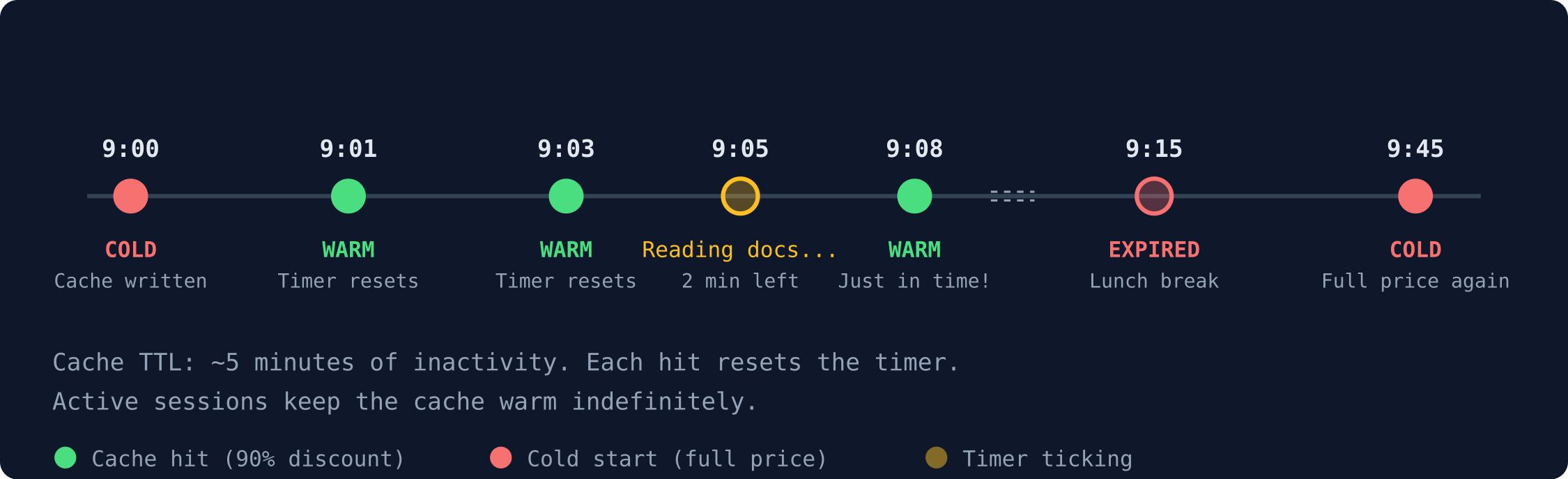

Cache lifetime (TTL)

Anthropic's cache expires after roughly 5 minutes of inactivity. Each cache hit resets the timer. So an active coding session — where you're sending messages every minute or two — keeps the cache warm indefinitely. The cache stays alive as long as you keep using it.

But stop typing for 5 minutes and the cache evaporates. Your next request hits cold: the server processes everything from scratch (a cache write), and then subsequent requests in that session hit the cache again.
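The sliding expiry can be simulated without touching the API. A minimal sketch, assuming a flat 300-second TTL that is refreshed on every request; the class name and the timeline are made up for illustration.

```python
class SlidingTTL:
    """Toy cache entry whose expiry is pushed forward on every request."""

    def __init__(self, ttl_seconds=300):
        self.ttl = ttl_seconds
        self.expires_at = None  # None means nothing has been cached yet

    def request(self, now):
        """Return 'hit' or 'miss' for a request at time `now` (in seconds)."""
        hit = self.expires_at is not None and now < self.expires_at
        # A hit refreshes the timer; a miss rewrites the cache and starts a new timer.
        self.expires_at = now + self.ttl
        return "hit" if hit else "miss"


cache = SlidingTTL()
timeline = [0, 90, 200, 450, 800, 860]  # seconds since the session started
results = [cache.request(t) for t in timeline]
print(results)  # ['miss', 'hit', 'hit', 'hit', 'miss', 'hit']
```

The request at 450 seconds still hits because the request at 200 pushed the expiry to 500. The 350-second gap before 800 is what finally lets the entry lapse.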

In my Experiment 2, I accidentally demonstrated this. Request 1 got a surprise cache hit from Experiment 1's data, which I'd run just seconds earlier. The TTL kept it alive across separate API calls. If I'd waited 6 minutes between experiments, that hit would have been a miss.

Anthropic also offers an extended TTL option (1 hour) for use cases that need longer persistence.

The experiments

I wrote a script that makes real API calls and shows you cache behavior through the usage fields in the response. Here's what I found.

Experiment 1: seeing it work

Two requests with the same 2,700-token system prompt. First should write the cache, second should read from it.

The raw usage fields from the API response:

Request 1 (cold start):

    response.usage = {
      "input_tokens": 2854,
      "cache_creation_input_tokens": 2727,   ← wrote to cache
      "cache_read_input_tokens": 0,          ← nothing to read yet
      "output_tokens": 89
    }

Request 2 (warm):

    response.usage = {
      "input_tokens": 127,                   ← only new tokens processed
      "cache_creation_input_tokens": 0,      ← nothing new to write
      "cache_read_input_tokens": 2727,       ← read everything from cache
      "output_tokens": 103
    }

Request 1 processed everything fresh and wrote 2,727 tokens to cache. Request 2 read all 2,727 back and only processed 127 new tokens (the conversation turns I added). 95.6% of the input came from cache, billed at a tenth of the normal price.

The fields to watch: cache_creation_input_tokens (tokens written to cache) and cache_read_input_tokens (tokens read from cache). If you're building on the API, check these after every call.
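A small helper makes that check routine. This sketch assumes the three input fields are disjoint, so total input is their sum, and it is applied here only to the Request 2 numbers above; the function name is hypothetical.

```python
def cache_hit_rate(usage):
    """Fraction of input tokens that were read from cache for one response."""
    total = (
        usage["input_tokens"]
        + usage["cache_creation_input_tokens"]
        + usage["cache_read_input_tokens"]
    )
    return usage["cache_read_input_tokens"] / total if total else 0.0


# Usage fields from Request 2 (warm) in Experiment 1.
request_2 = {
    "input_tokens": 127,
    "cache_creation_input_tokens": 0,
    "cache_read_input_tokens": 2727,
    "output_tokens": 103,
}

print(f"{cache_hit_rate(request_2):.1%}")  # 95.6%
```

With the SDK you would read the same values from attributes on `response.usage` instead of dictionary keys.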

Experiment 2: breaking it

Three requests. Same system prompt for the first two, then one with "senior software engineer" changed to "Senior Software Engineer." Two capital letters.

    Request 2 (same prefix): cache_read_input_tokens: 2,727 → HIT
    Request 3 (changed prefix): cache_read_input_tokens: 0 → MISS
    → Changing capitalization of TWO letters broke the entire cache.

2,727 tokens of computation, thrown away because of two capital letters. The model had to reprocess everything from scratch.

This matches a story from HN. A user named duggan found they were busting their cache on every request because they included a timestamp near the top of their system prompt. Moving it to the bottom recovered 20+ percentage points of cache hit rate.

A bonus finding: Request 1 in this experiment actually got a cache hit from Experiment 1, which I'd run seconds earlier. The 5-minute TTL means caches persist across separate API calls. I hadn't expected that to be visible, but there it was.

Experiment 3: multi-turn conversation

A 5-turn conversation simulating a coding session. The system prompt gets cached on turn 1, and each subsequent turn adds more conversation history.

The system prompt (2,727 tokens) was cached once and reused every turn. The hit rate decreases over time because conversation history grows while the cached portion stays fixed.

Why my experiment only hit 90% while real Claude Code sessions hit 96%: I only placed a cache breakpoint on the system prompt. Everything after it (the conversation messages) was processed fresh every turn. Claude Code places breakpoints more aggressively. It caches the system prompt, the tool definitions, the CLAUDE.md, and the conversation history up to the most recent messages. With auto-caching, the breakpoint slides forward each turn to include the latest assistant response.
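The effect of a fixed versus a sliding breakpoint can be modeled with simple token accounting. This is a simulation, not measured data: the 2,727-token system prompt comes from the experiments, but the 400 tokens added per turn is a hypothetical figure chosen for illustration.

```python
SYSTEM_TOKENS = 2_727   # system prompt size from the experiments
TOKENS_PER_TURN = 400   # hypothetical: user + assistant tokens added each turn


def hit_rates(turns, sliding):
    """Per-turn cache hit rate for a fixed or a sliding cache breakpoint."""
    rates = []
    for turn in range(1, turns + 1):
        total = SYSTEM_TOKENS + TOKENS_PER_TURN * turn
        if turn == 1:
            cached = 0                      # cold start: nothing to read yet
        elif sliding:
            # Everything sent on the previous turn is already cached.
            cached = SYSTEM_TOKENS + TOKENS_PER_TURN * (turn - 1)
        else:
            cached = SYSTEM_TOKENS          # only the system prompt is ever cached
        rates.append(cached / total)
    return rates


fixed = hit_rates(5, sliding=False)
moving = hit_rates(5, sliding=True)
for turn, (f, m) in enumerate(zip(fixed, moving), start=1):
    print(f"turn {turn}: fixed {f:.0%}  sliding {m:.0%}")
```

Under these assumptions the fixed breakpoint's hit rate falls every turn after the second, while the sliding one climbs, because only the newest turn is ever processed fresh.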

The difference looks like this:

What the Claude Code team does about it

Thariq's thread lays out seven lessons from building Claude Code around prompt caching. These are architectural decisions, not tips.

1. Order your prompt for caching.

Static content first, dynamic content last. Claude Code's prompt layout:

All Claude Code users share the same system prompt cache. Everyone in the same project shares the CLAUDE.md cache. Only the conversation is unique per session.

2. Use messages, not prompt edits.

When information becomes stale — the date changes, a file gets modified — don't update the system prompt. That would break the cache. Instead, send the updated information as a <system-reminder> tag in the next user message.

If you've used Claude Code, you've seen these. They show up in user messages like this:

    {
      "role": "user",
      "content": "<system-reminder>\n# currentDate\nToday's date is 2026-02-24.\n</system-reminder>\n\nFix the login bug"
    }

The system prompt stays frozen. The date goes in the message. The prefix stays intact. This is why <system-reminder> tags exist in Claude Code. They're not a quirk. They're a caching strategy.

The same pattern applies to git status, open file contents, and any other context that changes between turns. All of it goes in messages, never in the system prompt.
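If you are building your own agent loop, the same pattern is easy to reproduce. The helper below is a hypothetical sketch that only mirrors the message shape shown above; it is not Claude Code's implementation.

```python
def with_reminders(user_text, reminders):
    """Prepend changing context to a user message instead of editing the system prompt."""
    blocks = [
        f"<system-reminder>\n# {name}\n{body}\n</system-reminder>"
        for name, body in reminders.items()
    ]
    return {"role": "user", "content": "\n\n".join(blocks + [user_text])}


message = with_reminders(
    "Fix the login bug",
    {"currentDate": "Today's date is 2026-02-24."},
)
print(message["content"])
```

The system prompt string never changes between turns, so the cached prefix survives a date rollover or a modified file.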

3. Don't switch models mid-session.

Caches are per-model. Opus and Haiku have separate caches even if the prompt is identical — the KV cache is computed differently by each model's architecture. If you're 100K tokens into an Opus conversation and switch to Haiku for a "simple question," Haiku has to build its own cache from scratch. That cold-start write often costs more than just asking Opus.

Claude Code handles this with subagents. When it needs Haiku for a quick search (the Explore agent), it spawns a separate process with its own smaller context window and its own independent cache. The parent Opus session's cache is untouched.
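A rough comparison shows why the switch can backfire. The Opus price and the 10% read and 25% write multipliers come from this article; the Haiku price of $1 per million input tokens is an assumption for illustration only, so substitute the current rate before relying on the result.

```python
def input_cost(tokens, price_per_mtok, multiplier):
    """Dollar cost of processing `tokens` at a price scaled by a cache multiplier."""
    return tokens / 1_000_000 * price_per_mtok * multiplier


CONTEXT_TOKENS = 100_000
OPUS_PRICE = 5.00    # $ per million input tokens, from this article
HAIKU_PRICE = 1.00   # $ per million input tokens, ASSUMED for illustration

stay_on_opus = input_cost(CONTEXT_TOKENS, OPUS_PRICE, 0.10)      # warm cache read
switch_to_haiku = input_cost(CONTEXT_TOKENS, HAIKU_PRICE, 1.25)  # cold cache write

print(f"Opus, warm cache:  ${stay_on_opus:.3f}")
print(f"Haiku, cold write: ${switch_to_haiku:.3f}")
```

Under these assumptions the "cheap" model costs more for that turn, because it pays a full-price write on 100K tokens while Opus pays only the cached-read rate.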

4. Never add or remove tools mid-session.

Tool definitions are part of the cached prefix — they sit between the system prompt and the conversation messages. Adding one MCP tool changes the prefix, which invalidates the cache for the entire conversation history. Every message you sent before? The model has to reprocess all of it.

This is why Claude Code doesn't let MCP servers register new tools after the session starts. The tool list is locked at startup.
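One way to catch accidental prefix changes in your own code is to fingerprint the static part of the request and compare it across turns. A sketch, assuming the system prompt and tool list are plain JSON-serializable values; the function name and the example tools are hypothetical.

```python
import hashlib
import json


def prefix_fingerprint(system, tools):
    """Short hash of the static prefix; any change here means a cache miss."""
    # No sort_keys: key order and tool order are kept so any reordering is detected.
    canonical = json.dumps({"tools": tools, "system": system}, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


system = "You are a senior software engineer."
tools = [
    {"name": "Read", "description": "Read a file"},
    {"name": "Edit", "description": "Edit a file"},
]

at_startup = prefix_fingerprint(system, tools)
same_turn = prefix_fingerprint(system, list(tools))
after_adding_tool = prefix_fingerprint(
    system, tools + [{"name": "Slack", "description": "Post a message"}]
)

print(at_startup == same_turn)          # True
print(at_startup == after_adding_tool)  # False
```

Logging this value alongside the usage fields makes it easy to tell a TTL expiry from a prefix that changed underneath you.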

5. Design features around the cache.

When they built plan mode, the obvious design was to swap in read-only tools (remove Edit, remove Bash, add PlanMode tool). That would change the tool definitions and break the cache.

The cache-aware design: keep all tools in the prompt at all times, add EnterPlanMode and ExitPlanMode as two additional tools, and send plan mode instructions as a user message. The tool definitions never change between plan mode and normal mode. The only difference is a message saying "you're in plan mode, don't write files."

Bonus: because plan mode is just tools + messages, the model can enter plan mode on its own by calling EnterPlanMode. No cache cost.

6. Defer tools instead of removing them.

Claude Code has access to dozens of MCP tools that you might not use in any given session. The naive approach: only include tools the user needs. But which tools you include changes the prefix, and different users need different tools, so the cache can't be shared.

The cache-aware approach: include every tool every time, but send most of them as lightweight stubs:

    {
      "name": "mcp__slack__read_channel",
      "description": "Read messages from a Slack channel",
      "defer_loading": true
    }

The full JSON schema (parameters, types, descriptions) isn't included. Just the name, a one-line description, and a flag saying "load me later." When the model actually needs the tool, it calls ToolSearch to fetch the full schema, which gets appended as a message, not as a tool definition change.

The cached prefix stays identical for every user regardless of which MCP servers they have configured. The stubs are tiny (a few tokens each), so they barely affect the prefix size. The full schemas only load on demand, in messages.

7. Fork operations reuse the parent's prefix.

When Claude Code compacts a long conversation, it uses the identical system prompt, tools, and message history as the parent session, then appends the compaction prompt as a new user message. The cached prefix is reused for the compaction request.

Compaction and caching

This seventh lesson deserves its own explanation because it answers a question I had: what happens to the cache when Claude Code compacts your conversation?

Compaction happens when your context window fills up. Claude Code summarizes your conversation history into a shorter version so you can keep working. The naive concern is that rewriting the conversation would break the cache — after all, the messages changed.

Here's why it doesn't:

The compaction request uses the identical prefix as your current session: same system prompt, same tool definitions, same CLAUDE.md. Only the conversation portion changes — old messages get replaced by a summary, and Claude Code appends a compaction instruction ("summarize this conversation") as a new user message.

Since the prefix (system + tools + CLAUDE.md) is identical, the KV cache for those ~18K tokens is reused. The compaction request only needs to process the conversation summary and the compaction instruction as fresh tokens. After compaction finishes, you're left with a smaller context window that still has a warm cache for its prefix.

This is what Thariq means by "fork operations." Compaction is architecturally a fork of the parent session: it inherits the same cached prefix, diverges only in the messages. The design is deliberate. They could have rebuilt the prompt differently for compaction — a shorter system prompt, fewer tools, just what's needed for summarization. Instead, they use the identical prefix so the KV cache is reused.

The cost impact is real. Without prefix reuse, a compaction request for a 200K-token conversation would process ~18K tokens of system prompt + tools from scratch ($0.09 on Opus). With prefix reuse, those 18K tokens come from cache ($0.009). Over hundreds of compactions across all Claude Code users, the savings add up.

There's a side effect worth knowing: after compaction, your first message in the new shorter context still has a warm cache for the prefix. You don't pay a cold-start cost. If you /clear instead, the prefix cache might still be warm from TTL, but the conversation history is gone. If you wait more than 5 minutes after /clear, the prefix cache expires too, and your next turn pays full price for the prefix write. This is why compaction is the default behavior — it preserves more cache state than a manual reset.

Cache breakpoints and auto-caching

What a breakpoint is

A cache breakpoint is a marker you place on a content block in your API request telling Anthropic's servers: "cache everything from the start of the request up to this point." You do this by adding "cache_control": {"type": "ephemeral"} to a content block.

You get up to four breakpoints per request. Each one creates a potential cache boundary. When a subsequent request matches the prefix up to a breakpoint, the KV cache for that portion is loaded instead of recomputed. The "ephemeral" type means the cache uses the standard ~5 minute TTL.
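Before sending a request it can help to count the markers you have placed. The sketch below walks a request payload built as a plain dictionary and counts blocks carrying `cache_control`; the helper name, the example tool, and the prompt text are hypothetical, and nothing is sent to the API.

```python
MAX_BREAKPOINTS = 4  # limit described above


def count_breakpoints(payload):
    """Count blocks marked with cache_control across tools, system, and messages."""
    blocks = list(payload.get("tools", []))
    system = payload.get("system", [])
    if isinstance(system, list):            # a plain string cannot carry a marker
        blocks += system
    for message in payload.get("messages", []):
        content = message.get("content")
        if isinstance(content, list):
            blocks += content
    return sum(1 for block in blocks if "cache_control" in block)


marker = {"type": "ephemeral"}
payload = {
    "model": "claude-sonnet-4-6",
    "max_tokens": 100,
    "tools": [
        {
            "name": "read_file",
            "description": "Read a file",
            "input_schema": {"type": "object", "properties": {}},
            "cache_control": marker,
        }
    ],
    "system": [{"type": "text", "text": "Long system prompt...", "cache_control": marker}],
    "messages": [
        {
            "role": "user",
            "content": [{"type": "text", "text": "Hello", "cache_control": marker}],
        }
    ],
}

used = count_breakpoints(payload)
print(f"{used} of {MAX_BREAKPOINTS} breakpoints used")
```

Here three markers are in use: one closing the tool definitions, one on the system prompt, and one on the latest message.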

Cache writes (creating the KV cache) cost 25% more than normal input. Cache reads cost 90% less. The math works out in your favor fast:

For any conversation longer than two turns, the read savings overwhelm the write cost.
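The break-even point follows directly from those two multipliers. A minimal sketch, measuring cost in units of "one uncached pass over the prefix" and assuming the same prefix is reused on every request within the TTL:

```python
WRITE_MULTIPLIER = 1.25  # cache write: 25% more than normal input
READ_MULTIPLIER = 0.10   # cache read: 90% less than normal input


def relative_cost(n_requests):
    """Return (cached, uncached) cost of the prefix over n requests, in prefix-passes."""
    cached = WRITE_MULTIPLIER + READ_MULTIPLIER * (n_requests - 1)
    uncached = 1.0 * n_requests
    return cached, uncached


for n in (1, 2, 5, 10):
    cached, uncached = relative_cost(n)
    print(f"{n:>2} requests: cached {cached:.2f}  uncached {uncached:.2f}")
```

A single request is the only case where caching loses (1.25 versus 1.00). By the second request it is already ahead (1.35 versus 2.00), and by the tenth the cached prefix costs 2.15 against 10.00.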

Auto-caching

Anthropic now supports automatic caching. Instead of manually placing breakpoints, you add one field:

    {
      "model": "claude-opus-4-6",
      "cache_control": {"type": "ephemeral"},
      "system": "...",
      "messages": [...]
    }

The system automatically caches the last cacheable block and slides the breakpoint forward as your conversation grows. You can combine it with explicit breakpoints if you want finer control; auto-caching uses one of your four available slots.

If you're using the block-level syntax (which works on all SDK versions), it looks like this:

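    import anthropic

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    your_system_prompt = "..."  # placeholder: must exceed the model's minimum cacheable length

    # Mark the system prompt as a cache breakpoint.
    system_blocks = [
        {"type": "text", "text": your_system_prompt, "cache_control": {"type": "ephemeral"}}
    ]

    response = client.messages.create(
        model="claude-sonnet-4-6",
        max_tokens=100,
        system=system_blocks,
        messages=[{"role": "user", "content": "Hello"}],
    )

    print(response.usage.cache_read_input_tokens)      # tokens read from cache
    print(response.usage.cache_creation_input_tokens)  # tokens written to cache

The practical takeaways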

Don't put timestamps or changing data at the top of your system prompt. Put it at the bottom, or better, in a user message.

Don't add or remove tools mid-session. This includes MCP tools.

Don't switch models mid-session. Use subagents if you need a different model.

If you build on the API, log cache_read_input_tokens and cache_creation_input_tokens after every call. Treat a zero cache read on an expected hit the same way you'd treat a failed health check.