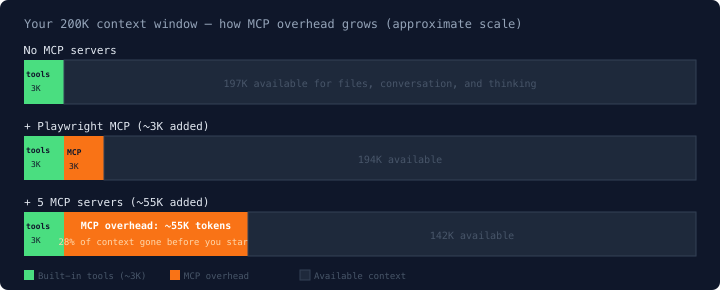

MCP connects Claude Code to external tools: browsers, databases, internal APIs, or anything that has an MCP server. Each server adds token overhead to every turn, whether you use it or not. Five servers can consume 55,000 tokens (28% of your 200K context) before you type a single character.

This guide covers what MCP is, when it's worth using, and how to build your own.

What is MCP?

MCP (Model Context Protocol) is an open protocol that Anthropic released in November 2024. It standardizes how AI tools connect to external services.

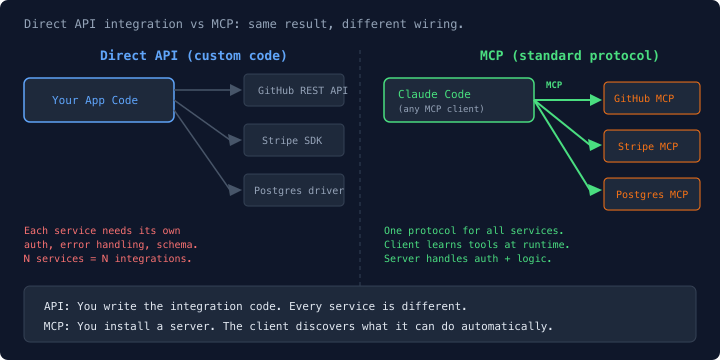

Without MCP, connecting an AI tool to an external service means writing custom integration code for that specific API. GitHub has a REST API, Stripe has an SDK, Postgres has a driver. Each one has its own auth flow, error handling, and data format. Five services means five integrations.

MCP replaces that with a single protocol. An MCP server wraps a service and exposes it through a standard interface. The client (Claude Code, Cursor, Windsurf) doesn't need to know anything about the underlying service. It connects to the MCP server, discovers what tools are available, and uses them.

An MCP server exposes tools: functions the AI can call. A Playwright server exposes browser_navigate, browser_click, browser_screenshot. A database server exposes run_query and list_tables. Each tool has a name, description, and a JSON schema defining its parameters.

The MCP spec also defines resources (data the AI can read) and prompts (templates for common tasks), but Claude Code currently only uses tools. If you expose resources or prompts from your MCP server, Claude Code won't pick them up.

A server you build for Claude Code also works in Cursor, Windsurf, or any other MCP client. That's the point of having a standard.

How MCP works in Claude Code

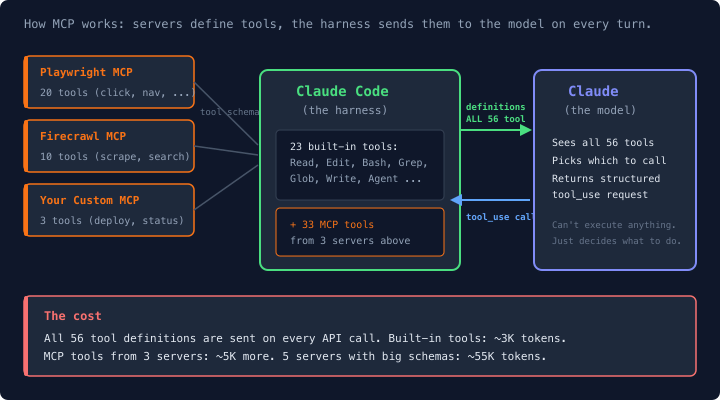

Every tool Claude Code can use (Read, Edit, Bash, Grep, and about 20 others) is defined as a JSON schema sent to the model on every API call. The model sees these definitions and decides which tool to call. MCP servers add more tools to that list.

When you add a Playwright MCP server, Claude Code gains about 20 new tools. Each one gets sent to the model on every single turn, alongside all the built-in tools. The model doesn't connect to the MCP server directly. Claude Code sits in the middle: it tells the model what tools exist, the model picks which one to call, Claude Code routes the call to the right server, gets the result, and feeds it back.
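Here's a toy version of that routing loop. It's a sketch to show the shape of the flow, not how Claude Code is implemented: `ToolHost`, `register_server`, and `pick_tool` (a stand-in for the model) are names I made up for illustration.

```python
from typing import Callable

# A tool is just a callable that takes keyword arguments and returns text.
Tool = Callable[..., str]


class ToolHost:
    """Toy stand-in for the client that sits between the model and the servers."""

    def __init__(self) -> None:
        # tool name -> (server that owns it, the callable itself)
        self.routes: dict[str, tuple[str, Tool]] = {}

    def register_server(self, server: str, tools: dict[str, Tool]) -> None:
        for name, fn in tools.items():
            self.routes[name] = (server, fn)

    def tool_names(self) -> list[str]:
        # This is the list the model gets to see on every turn.
        return sorted(self.routes)

    def call(self, name: str, **arguments: str) -> str:
        # Route the model's choice to whichever server owns the tool.
        server, fn = self.routes[name]
        return f"[{server}] {fn(**arguments)}"


def pick_tool(available: list[str]) -> tuple[str, dict[str, str]]:
    """Hypothetical stand-in for the model: it always picks browser_navigate."""
    assert "browser_navigate" in available
    return "browser_navigate", {"url": "https://example.com"}


host = ToolHost()
host.register_server("builtin", {"Read": lambda path: f"contents of {path}"})
host.register_server("playwright", {"browser_navigate": lambda url: f"loaded {url}"})

name, arguments = pick_tool(host.tool_names())  # the model chooses
result = host.call(name, **arguments)           # the host routes the call
print(result)
```

The model only ever sees names and schemas. The host is the one holding the connections.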

MCP tools go through the same permission system as built-in tools. Claude Code will prompt you to allow or deny MCP tool calls, and you can allowlist specific tools in your settings. It won't run arbitrary MCP tools without asking.

The token cost

Every tool Claude Code can use gets sent to the model as a JSON schema on each API call. Here's what one Playwright tool definition looks like:

```json
{
  "name": "browser_navigate",
  "description": "Navigate to a URL. Waits for page load before returning.",
  "input_schema": {
    "type": "object",
    "properties": {
      "url": {
        "type": "string",
        "description": "URL to navigate to"
      }
    },
    "required": ["url"]
  }
}
```

That's about 60 tokens. A more complex tool with several parameters runs 150-200. The Playwright MCP server has roughly 20 tools, which puts it around 2,000-3,000 tokens.
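If you want a ballpark for your own servers, a rough sketch like this gets you close. It assumes about 4 characters per token for compact JSON, which is a common rule of thumb and not the real tokenizer, so treat the result as an estimate. `estimate_tokens` is a hypothetical helper.

```python
import json

# The browser_navigate definition from above.
tool_definition = {
    "name": "browser_navigate",
    "description": "Navigate to a URL. Waits for page load before returning.",
    "input_schema": {
        "type": "object",
        "properties": {
            "url": {"type": "string", "description": "URL to navigate to"}
        },
        "required": ["url"],
    },
}


def estimate_tokens(definition: dict) -> int:
    """Rough estimate: serialize compactly, then assume ~4 characters per token."""
    compact = json.dumps(definition, separators=(",", ":"))
    return len(compact) // 4


estimate = estimate_tokens(tool_definition)
print(f"one tool: ~{estimate} tokens")
print(f"20 similar tools: ~{estimate * 20} tokens")
```

Real servers land higher than the 20x figure because most tools have more parameters and longer descriptions than this one.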

Claude Code's ~20 built-in tools (Read, Edit, Bash, Grep, and the rest) already run about 3,000 tokens of overhead on every API call. Add one MCP server and you've doubled that. Add five and it gets expensive fast.

Thariq Shihipar, a Claude Code engineer, estimated that a typical setup with 5 MCP servers adds ~55,000 tokens of tool definitions. The exact number depends on which servers you use and how many tools each exposes. GitHub MCP alone runs ~26,000 tokens because it exposes 50+ tools with detailed schemas.

On Opus at $15 per million input tokens, 55K extra tokens per turn adds roughly $0.80 per turn in a fresh session. With prompt caching (which hits ~90% of the time in normal use), that drops to about $0.08 per turn. It adds up across a long session, and it permanently reduces the space available for files, conversation, and thinking.
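The arithmetic behind those numbers, as a quick sketch. The price and token count come from the paragraph above. The 10% multiplier for cached tokens is my assumption, chosen because it reproduces the ~$0.08 figure; check current pricing before relying on it.

```python
OPUS_INPUT_PRICE_PER_MTOK = 15.00  # dollars per million input tokens (from the text)
TOOL_DEFINITION_TOKENS = 55_000    # five-server estimate (from the text)
CONTEXT_WINDOW = 200_000           # tokens
CACHE_READ_MULTIPLIER = 0.10       # assumption: cached tokens billed at 10% of base price


def cost_per_turn(tokens: int, price_per_mtok: float, multiplier: float = 1.0) -> float:
    """Dollar cost of sending `tokens` input tokens once."""
    return tokens * price_per_mtok / 1_000_000 * multiplier


uncached = cost_per_turn(TOOL_DEFINITION_TOKENS, OPUS_INPUT_PRICE_PER_MTOK)
cached = cost_per_turn(
    TOOL_DEFINITION_TOKENS, OPUS_INPUT_PRICE_PER_MTOK, CACHE_READ_MULTIPLIER
)
context_share = TOOL_DEFINITION_TOKENS / CONTEXT_WINDOW

print(f"uncached turn: ${uncached:.4f}")
print(f"cached turn:   ${cached:.4f}")
print(f"context used:  {context_share:.1%}")
```

The dollar cost shrinks with caching. The context share doesn't: those tokens occupy the window either way.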

How Claude Code manages this: Tool Search

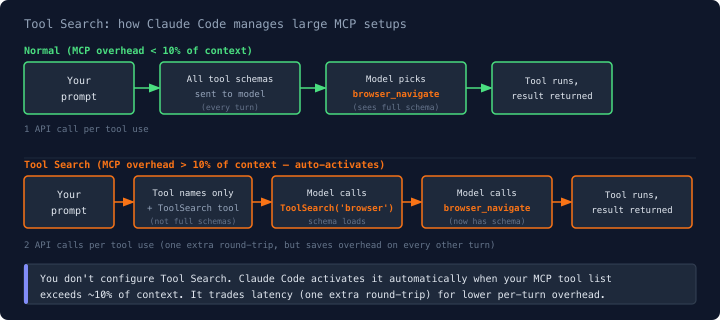

When your MCP tool definitions get large (more than 10% of context), Claude Code stops sending full schemas to the model. Instead, it lists just the tool names and gives the model a ToolSearch tool. The model keyword-searches or directly selects a tool by name, which loads the full schema. Then it can call the actual tool on a follow-up call.

This adds one extra round-trip per MCP tool use, but cuts the per-turn overhead significantly. It kicks in automatically. You don't configure it.
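A minimal sketch of the idea, assuming a simple substring match for the search. The real matching logic isn't documented here, and the schemas below are abbreviated, made-up stand-ins, so read this as an illustration of the two modes only.

```python
# Abbreviated, hypothetical schemas standing in for real MCP tool definitions.
FULL_SCHEMAS = {
    "browser_navigate": {"description": "Navigate to a URL.", "required": ["url"]},
    "browser_click": {"description": "Click an element on the page.", "required": ["target"]},
    "run_query": {"description": "Run a SQL query.", "required": ["sql"]},
}

THRESHOLD = 0.10  # from the text: tool definitions above 10% of context


def tools_for_prompt(schema_tokens: int, context_window: int) -> dict:
    """Decide what the model sees: full schemas, or names plus a search tool."""
    if schema_tokens / context_window > THRESHOLD:
        return {"mode": "deferred", "tools": sorted(FULL_SCHEMAS) + ["ToolSearch"]}
    return {"mode": "full", "tools": FULL_SCHEMAS}


def tool_search(query: str) -> dict:
    """Return full schemas whose name or description contains the query."""
    q = query.lower()
    return {
        name: schema
        for name, schema in FULL_SCHEMAS.items()
        if q in name.lower() or q in schema["description"].lower()
    }


small = tools_for_prompt(schema_tokens=3_000, context_window=200_000)
large = tools_for_prompt(schema_tokens=55_000, context_window=200_000)
print(small["mode"], large["mode"])
print(list(tool_search("browser")))
```

In deferred mode the model pays for a handful of names up front and loads a full schema only when it needs one.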

When MCP is worth it (and when it's not)

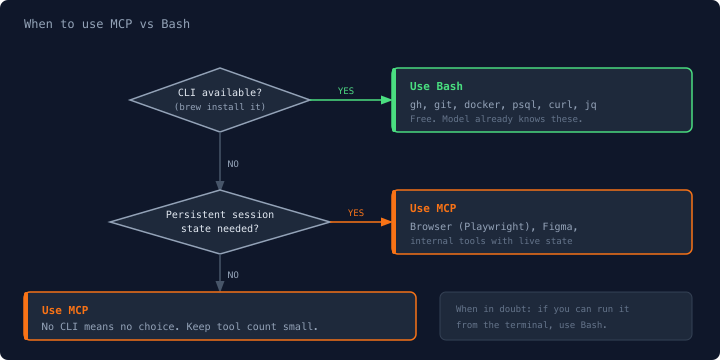

Use MCP when no CLI exists, or when session state matters

The clearest case is browser automation. There's no CLI equivalent for navigating a real browser, and browser state (DOM, cookies, login session) needs to survive between tool calls. If you ran Playwright via Bash scripts, you'd either write one monolithic script — losing Claude's ability to react to results mid-flow — or restart the browser on every call and lose your session. The MCP keeps the browser alive so each tool call picks up exactly where the last one left off.

Figma is the same: no CLI, and the design canvas has session state. Internal APIs your team controls are another good fit, especially if they have no CLI wrapper.

For everything else (gh, git, docker, psql), the CLI already handles multi-step sequences fine. Claude calls one command, reads the output, calls the next. No persistent state is needed between calls.

Skip MCP when a CLI exists

This is Thariq's "Bash Is All You Need" principle. The gh CLI for GitHub operations is already available through the built-in Bash tool. The model already knows how to use gh. A GitHub MCP server adds ~26,000 tokens of tool definitions for the same capabilities.

The same applies to git, docker, kubectl, psql, curl, jq. Any CLI the model already knows works through Bash at no extra token cost beyond the command and its output. If you can brew install it, skip the MCP server.

Eric Holmes made this argument in "MCP is dead. Long live the CLI" and he's right for these cases. The HN discussion covers the nuances if you want the full debate.

How to set up an MCP server

Two ways: the CLI command or editing the config file directly.

Method 1: CLI

```bash
# Add a server (stdio transport, runs locally)
claude mcp add playwright -- npx @anthropic-ai/mcp-playwright

# Add with environment variables
claude mcp add firecrawl -e FIRECRAWL_API_KEY=your-key -- npx -y firecrawl-mcp

# Add a remote server (HTTP transport)
claude mcp add --transport http my-server https://my-server.example.com/mcp

# List your servers
claude mcp list

# Remove a server
claude mcp remove playwright
```

Method 2: Edit the config file

For complex setups, edit the config file directly. Faster when you're adding multiple servers or copying configs between machines.

```json
{
  "mcpServers": {
    "playwright": {
      "command": "npx",
      "args": ["@anthropic-ai/mcp-playwright"],
      "type": "stdio"
    },
    "firecrawl": {
      "command": "npx",
      "args": ["-y", "firecrawl-mcp"],
      "type": "stdio",
      "env": {
        "FIRECRAWL_API_KEY": "your-key-here"
      }
    }
  }
}
```

Restart Claude Code after adding servers (/quit and relaunch) for changes to take effect.
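Hand-edited JSON is easy to break, so it can help to sanity-check the file before you restart. This is a small sketch of my own, not Claude Code's validation logic: `check_mcp_config` is a hypothetical helper, and the rules it applies (stdio servers need a command, args must be a list, placeholder keys get flagged) are assumptions based on the config shape shown above.

```python
import json


def check_mcp_config(text: str) -> list[str]:
    """Return a list of problems found in an mcpServers config. Empty means it looks fine."""
    try:
        config = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg} at line {exc.lineno}"]

    servers = config.get("mcpServers") if isinstance(config, dict) else None
    if not isinstance(servers, dict) or not servers:
        return ["no servers found under 'mcpServers'"]

    problems = []
    for name, entry in servers.items():
        # Assumption: an entry without an explicit type is a stdio server.
        if entry.get("type", "stdio") == "stdio" and not entry.get("command"):
            problems.append(f"{name}: stdio server needs a 'command'")
        if not isinstance(entry.get("args", []), list):
            problems.append(f"{name}: 'args' must be a list")
        for key, value in entry.get("env", {}).items():
            if not value or str(value).startswith("your-key"):
                problems.append(f"{name}: env var {key} still has a placeholder value")
    return problems


sample = """
{
  "mcpServers": {
    "playwright": {"command": "npx", "args": ["@anthropic-ai/mcp-playwright"], "type": "stdio"},
    "firecrawl": {
      "command": "npx",
      "args": ["-y", "firecrawl-mcp"],
      "type": "stdio",
      "env": {"FIRECRAWL_API_KEY": "your-key-here"}
    }
  }
}
"""

problems = check_mcp_config(sample)
for problem in problems:
    print(problem)
```

Run against the example config, it flags the placeholder API key and nothing else.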

Verify it works

After restarting, run claude mcp list to confirm your servers are connected. Then try using a tool from the server. For Playwright: "take a screenshot of https://example.com". If it works, you're set.

Three config scopes

Claude Code supports three levels of configuration:

User (~/.claude.json): Available in every project. Good for servers you always want.

Project (.claude/settings.json): Scoped to that directory. Committed to git so your team gets the same setup.

Local project (.claude/settings.local.json): Same scope as project, but gitignored. This is the right place for API keys and credentials.

Build your own MCP server

The real value of MCP is wrapping your own tools so Claude Code can use them. Internal APIs, custom scripts, company-specific workflows.

Here's a minimal server that wraps an internal deploy API:

```python
import os

import httpx
from mcp.server.fastmcp import FastMCP

mcp = FastMCP("my-internal-tool")


@mcp.tool()
async def get_deploy_status(service: str) -> str:
    """Check the deploy status for a service.

    Args:
        service: Name of the service (e.g. api, web, worker)
    """
    async with httpx.AsyncClient() as client:
        resp = await client.get(
            f"https://internal.yourcompany.com/deploys/{service}",
            headers={"Authorization": f"Bearer {os.environ['DEPLOY_TOKEN']}"},
        )
        resp.raise_for_status()  # surface HTTP errors instead of parsing an error body
        data = resp.json()
    return f"{service}: {data['status']} (deployed {data['last_deploy']})"


if __name__ == "__main__":
    mcp.run()  # serve over stdio so running the script starts the server
```

The @mcp.tool() decorator turns a Python function into an MCP tool. The docstring becomes the tool description the model reads. The type hints become the parameter schema. Connect it to Claude Code with:

```bash
claude mcp add my-tool -- uv run server.py
```

Things I've learned building these:

Keep the tool count small, because every tool costs ~150 tokens per turn whether you use it or not. Three focused tools cost 450 tokens. Thirty tools cost 4,500. Build what you need, not what might be useful someday.

Write clear docstrings. The model reads your tool descriptions to decide when and how to call them. Vague descriptions lead to wrong tool calls. Be specific about what the tool does, what the parameters mean, and when to use it vs other tools.

Don't print to stdout for stdio servers. This breaks the communication channel between Claude Code and your server. Use stderr or a logging library instead. This trips up everyone building their first server.
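For the stdout point, here's a minimal sketch of logging that stays off the protocol channel. It uses only the standard library; the logger name and the `describe_status` function are placeholders for your own code.

```python
import logging
import sys

# Send all log output to stderr so stdout stays reserved for protocol messages.
logging.basicConfig(
    stream=sys.stderr,
    level=logging.INFO,
    format="%(asctime)s %(levelname)s %(message)s",
)
log = logging.getLogger("my-internal-tool")


def describe_status(service: str, status: str) -> str:
    """Placeholder for a tool body: logs to stderr, returns the result."""
    log.info("checking %s", service)  # safe: goes to stderr
    # print(f"checking {service}")    # unsafe in a stdio server: goes to stdout
    return f"{service}: {status}"


result = describe_status("api", "healthy")
```

The tool's return value is what reaches the model. Anything you want to see while debugging goes through the logger.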

The MCP SDK supports both Python and TypeScript. The official docs have a step-by-step tutorial if you want the full walkthrough.
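To make the decorator behavior concrete, here's a simplified sketch of how a function's signature and docstring can be turned into a tool definition. This is not the SDK's implementation. The `tool` decorator, `REGISTRY`, and the `verbose` parameter are hypothetical, and the type mapping covers only a few basic types.

```python
import inspect
from typing import Callable, get_type_hints

# Minimal mapping from Python types to JSON schema types (illustrative only).
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}
REGISTRY: dict[str, dict] = {}


def tool(fn: Callable) -> Callable:
    """Register fn and derive a tool definition from its signature and docstring."""
    hints = get_type_hints(fn)
    params = inspect.signature(fn).parameters
    REGISTRY[fn.__name__] = {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "input_schema": {
            "type": "object",
            "properties": {
                p: {"type": JSON_TYPES.get(hints.get(p), "string")} for p in params
            },
            # Parameters without a default value are required.
            "required": [
                p for p, spec in params.items()
                if spec.default is inspect.Parameter.empty
            ],
        },
    }
    return fn


@tool
def get_deploy_status(service: str, verbose: bool = False) -> str:
    """Check the deploy status for one service.

    Use this before deploying, not for reading logs.

    Args:
        service: Name of the service (e.g. api, web, worker)
        verbose: Include the last deploy's commit message
    """
    return f"{service}: unknown"


definition = REGISTRY["get_deploy_status"]
print(definition["input_schema"])
```

Everything the model knows about the tool comes from that definition, which is why the docstring deserves the same care as the code.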

Common gotchas

Adding or removing an MCP server mid-session breaks your prompt cache. Claude Code caches your setup to avoid reprocessing it every turn. Change the server list, and it has to rebuild that cache from scratch. That single turn can cost 5x normal. Set up your servers before you start working.
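A toy model of why this happens, assuming the cache is keyed on the exact content at the front of the prompt, where the tool definitions live. The hashing scheme and the `run_turn` function are mine, not Claude Code's internals.

```python
import hashlib
import json


def prefix_key(tool_definitions: list[dict]) -> str:
    """Hash the serialized tool list, standing in for the cached prompt prefix."""
    serialized = json.dumps(tool_definitions, sort_keys=True)
    return hashlib.sha256(serialized.encode()).hexdigest()[:12]


cache: dict[str, str] = {}


def run_turn(tool_definitions: list[dict]) -> str:
    """Report whether this turn could reuse a previously processed prefix."""
    key = prefix_key(tool_definitions)
    if key in cache:
        return "cache hit"
    cache[key] = "processed prefix"
    return "cache miss"


tools = [{"name": "Read"}, {"name": "Bash"}]
first = run_turn(tools)   # nothing cached yet
second = run_turn(tools)  # same tool list, prefix is reused

tools_with_mcp = tools + [{"name": "browser_navigate"}]
third = run_turn(tools_with_mcp)  # tool list changed, prefix must be rebuilt

print(first, second, third)
```

Any change to the tool list changes the key, so the next turn starts cold.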

More servers don't mean better results. Each server adds overhead to every turn, whether you use it that turn or not. Start with one or two for things you actually need.

Put credentials in .claude/settings.local.json, not .claude/settings.json. The local config is gitignored. The regular project config isn't.

Server startup failures are quiet. If an MCP server fails to start, Claude Code won't always surface the error. Run claude mcp list to check your connections.

MCP earns its cost when you use it for things CLIs can't do: browser automation, live documentation, internal tools. For everything else, Bash is free. Start there.